Batch System

Introduction

The batch system is a load distribution implementation that ensures convenient and fair use of a shared resource. Submitting jobs to a batch system allows a user to reserve specific resources with minimal interference to other users. All users are required to submit resource-intensive processing to the compute nodes through the batch system. Attempting to circumvent the batch system is not allowed.

On ACES, Slurm is the batch system that provides job management.

Using Drona Composer to create and submit Jobs

The following sections will discuss the batch system in detail and how to manually create, submit, and monitor batch jobs, but first, we will show a more user-friendly way to create and submit batch jobs.

Drona Workflow Engine, created by HPRC, will guide the user to provide required information, analyze the input for inconsistencies, generate a Slurm batch job, and submit the generated batch script on the user's behalf.

Drona Composer provides a 100% graphical interface to generate and submit Generic jobs without the need to write a Slurm script or even be aware of Slurm syntax and Generic internals. Drona guides you in providing Generic-specific information and generates and submits a Slurm job on your behalf.

Accessing Drona Composer

Drona is available on all HPRC Portals. Once you log in to the portal of your choice, select Drona Composer from the Jobs tab. This will open a new window showing the Drona Composer interface.

Drona Environments

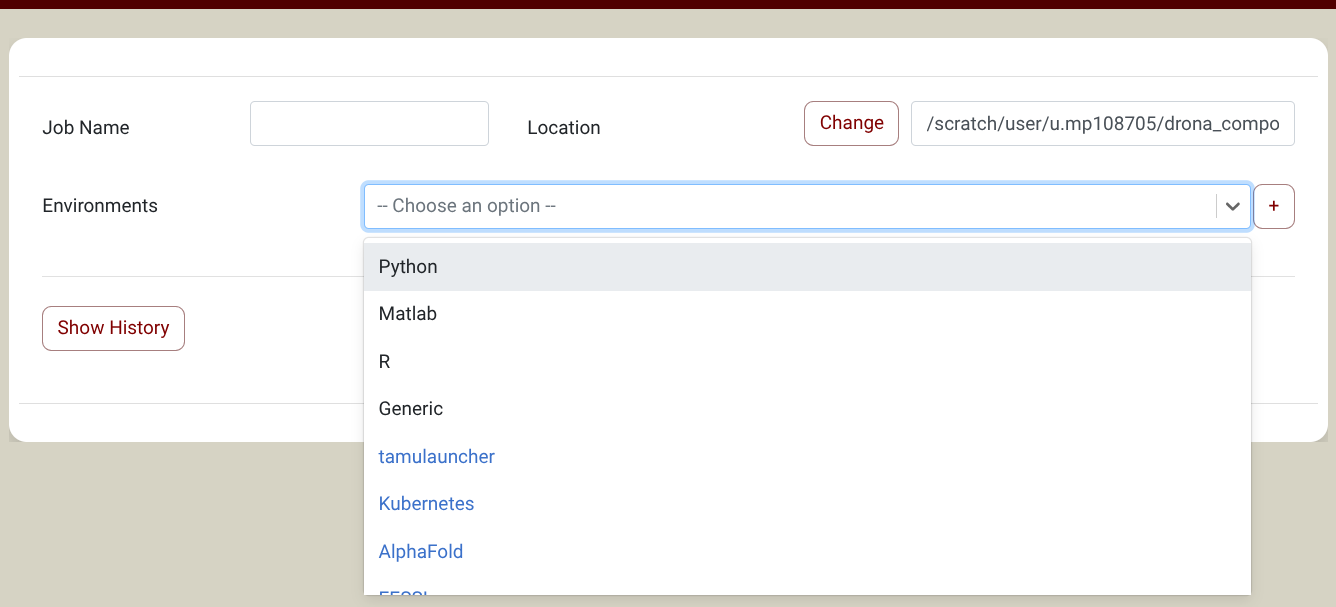

You can find the Generic environment in the Environment dropdown. The image below shows a screenshot of the Drona Composer interface with a dropdown menu listing all available environments. NOTE: if you don't see the Generic environment, you need to import it first. Click the + sign next to the environments dropdown and select the Generic environment in the popup window. You only need to do this once. See the import section for more information.

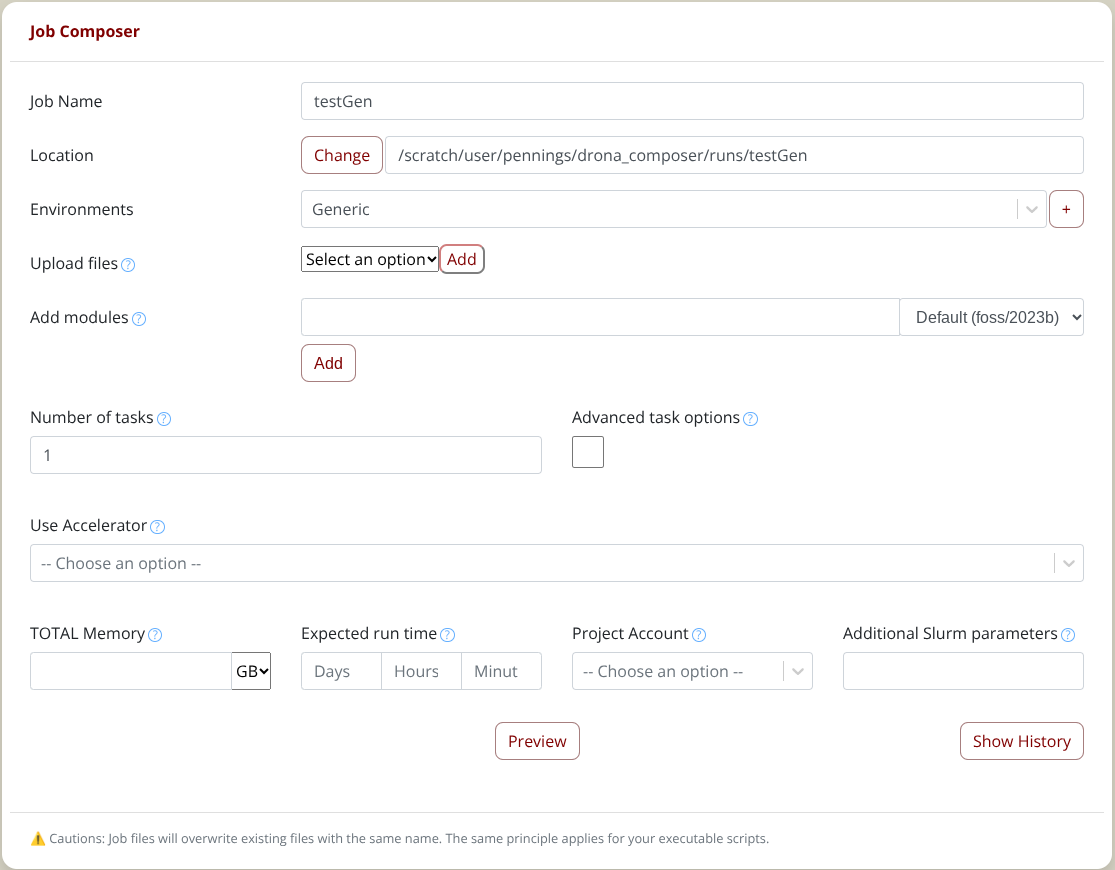

Once you select the Generic environment, the form will expand with additional fields to guide you in providing all the relevant information. The screenshot below shows the extra fields for the Generic environment.

Once you have filled in all the fields, click the "Generate" button. This will show a fully editable preview screen with the generated job scripts.

In the preview window, enter all the commands you want to execute in the batch script.

To submit the job, click on the submit button, and Drona Composer will submit the generated job on your behalf.

For detailed information about Drona Composer, check out the Drona Composer Guide.

Building Job Files

While job files are not the only way to submit programs for execution, they fulfill the needs of most users.

The general idea behind job files is as follows:

- Request resources

- Add your commands and/or scripts to run

- Submit the job to the batch system

In a job file, resource specification options are preceded by a script directive. For each batch system, this directive is different. On ACES (Slurm) this directive is #SBATCH. For every line of resource specifications, this directive must be the first text of the line, and all specifications must come before any executable lines. An example of a resource specification is given below:

#SBATCH --job-name=MyExample #Set the job name to "MyExample"

Note: Comments in a job file also begin with a # but Slurm recognizes #SBATCH as a directive.
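As stated above, all specifications must come before any executable lines. sbatch stops reading #SBATCH directives at the first non-comment, non-blank line, so a directive placed after a command is silently ignored. The following is a minimal sketch of a check for this mistake. The helper name check_sbatch_order is hypothetical and is not an HPRC or Slurm tool. It treats ##SBATCH lines as ordinary comments, which matches how the job examples on this page disable optional settings.

```shell
#!/bin/bash
# check_sbatch_order: hypothetical helper, not part of Slurm or HPRC tooling.
# Reports #SBATCH lines that appear after the first executable line of a job
# file; sbatch stops reading directives at that point, so they are ignored.
# Returns 1 if any misplaced directive is found, 0 otherwise.
check_sbatch_order() {
  awk '
    /^[[:space:]]*$/ { next }                  # skip blank lines
    /^#SBATCH/ {
      if (seen_exec) {
        printf "line %d: ignored, after first command: %s\n", NR, $0
        bad = 1
      }
      next
    }
    /^[[:space:]]*#/ { next }                  # other comments, incl. ##SBATCH
    { seen_exec = 1 }                          # first executable line reached
    END { exit (bad ? 1 : 0) }
  ' "$1"
}
```

For example, `check_sbatch_order MyJob.slurm` prints nothing and returns 0 when every directive precedes the first command.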

A list of the most commonly used and important options for these job files are given in the following section.

Basic Job Specifications

Several of the most important options are described below. These basic options are typically all that is needed to run a job on ACES.

| Specification | Option | Example | Example-Purpose |
|---|---|---|---|
| Wall Clock Limit | --time=[hh:mm:ss] | --time=05:00:00 | Set wall clock limit to 5 hours 00 min |
| Job Name | --job-name=[SomeText] | --job-name=mpiJob | Set the job name to "mpiJob" |
| Total Task/Core Count | --ntasks=[#] | --ntasks=96 | Request 96 tasks/cores total |
| Tasks per Node | --ntasks-per-node=# | --ntasks-per-node=48 | Request exactly (or at most) 48 tasks per node |
| Memory Per Node | --mem=value[K\|M\|G\|T] | --mem=240G | Request 240 GB per node |
| Combined stdout/stderr | --output=[OutputName].%j | --output=mpiOut.%j | Collect stdout/err in mpiOut.[JobID] |

Note that Slurm divides processing resources as follows: Nodes -> Cores/CPUs -> Tasks

A user may change the number of tasks per core. For the purposes of this guide, each core will be associated with exactly a single task.

Optional Job Specifications

A variety of optional specifications are available to customize your job. A table of the specifications most useful for users of ACES is coming soon.

Alternative Specifications

The job options within the above sections specify resources with the following method:

- Cores and CPUs are equivalent

- 1 Task per 1 CPU desired

- You specify: desired number of tasks (equals number of CPUs)

- You specify: desired number of tasks per node (equal to or less than the total number of cores per compute node)

- You get: total nodes equal to (number of CPUs) / (tasks per node), rounded up

- You specify: desired Memory per node
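With this method, the node count follows from the two task settings: divide the total tasks by the tasks per node and round up. The following is a minimal arithmetic sketch. The function name nodes_needed is hypothetical and is only an illustration, not a Slurm command.

```shell
#!/bin/bash
# nodes_needed: hypothetical helper illustrating the node-count arithmetic.
# Usage: nodes_needed NTASKS NTASKS_PER_NODE
# Integer ceiling division: a partial node rounds up to a whole node.
nodes_needed() {
  local ntasks="$1" per_node="$2"
  echo $(( (ntasks + per_node - 1) / per_node ))
}

nodes_needed 96 48    # 2 nodes, as with --ntasks=96 --ntasks-per-node=48
nodes_needed 100 48   # 3 nodes: 100 tasks do not fit on 2 nodes of 48
```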

Slurm allows users to specify resources in units of Tasks, CPUs, Sockets, and Nodes.

There are many overlapping settings, and some settings may (quietly) override the defaults of other settings. A good understanding of Slurm options is needed to use these methods correctly.

| Specification | Option | Example | Example-Purpose |
|---|---|---|---|
| Node Count | --nodes=[min[-max]] | --nodes=4 | Spread all tasks/cores across 4 nodes |
| CPUs per Task | --cpus-per-task=# | --cpus-per-task=4 | Require 4 CPUs per task (default: 1) |
| Memory per CPU | --mem-per-cpu=MB | --mem-per-cpu=2000 | Request 2000 MB per CPU |
| Memory per Node (All, Multi) | --mem=0 | --mem=0 | On every node in the job, request the maximum memory available on the node with the least memory |
| Tasks per Socket | --ntasks-per-socket=# | --ntasks-per-socket=6 | Request max of 6 tasks per socket |
| Sockets per Node | --sockets-per-node=# | --sockets-per-node=2 | Restrict to nodes with at least 2 sockets |

If you want to make resource requests in an alternative format, you are free to do so. Our ability to support alternative resource request formats may be limited.

Environment Variables

All the nodes enlisted for the execution of a job carry most of the environment variables that the login process created: HOME, SCRATCH, PWD, PATH, USER, etc. In addition, Slurm defines new ones in the environment of an executing job. Below is a list of the most commonly used environment variables.

| Variable | Usage | Description |
|---|---|---|
| Job ID | $SLURM_JOBID | Batch job ID assigned by Slurm. |
| Job Name | $SLURM_JOB_NAME | The name of the Job. |
| Queue | $SLURM_JOB_PARTITION | The name of the queue the job is dispatched from. |
| Submit Directory | $SLURM_SUBMIT_DIR | The directory the job was submitted from. |
| Temporary Directory | $TMPDIR | This is a directory assigned locally on the compute node for the job located at /tmp/job.$SLURM_JOBID. Use of $TMPDIR is recommended for jobs that use many small temporary files. |

Basic Slurm Environment Variables

Note: To see all relevant Slurm environment variables for a job, add the following line to the executable section of a job file and submit that job. All the variables will be printed in the output file.

env | grep SLURM
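If you also run a job file interactively for testing, the Slurm variables from the table above are not set. The following is a small sketch that prints those variables with fallback values, using bash's ${VAR:-default} expansion. The function name job_summary and the placeholder values are assumptions chosen for illustration.

```shell
#!/bin/bash
# job_summary: hypothetical helper. Prints the job variables from the table
# above. Outside a Slurm job they are unset, so each falls back to a placeholder.
job_summary() {
  echo "Job ID: ${SLURM_JOBID:-none}"
  echo "Job name: ${SLURM_JOB_NAME:-none}"
  echo "Queue: ${SLURM_JOB_PARTITION:-none}"
  echo "Submit directory: ${SLURM_SUBMIT_DIR:-$PWD}"
  echo "Temporary directory: ${TMPDIR:-/tmp}"
}

job_summary
```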

Executable Commands

After the resource specification section of a job file comes the executable section. This executable section contains all the necessary UNIX, Linux, and program commands that will be run in the job. Some commands that may go in this section include, but are not limited to:

- Changing directories

- Loading, unloading, and listing modules

- Launching software

An example of a possible executable section is below:

cd $SCRATCH # Change current directory to /scratch/user/[username]/

ml purge # Purge all modules

ml intel/2022a # Load the intel/2022a module

ml # List all currently loaded modules

./myProgram.o # Run "myProgram.o"

For information on the module system or specific software, visit our Modules page and our Software page.
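The $TMPDIR recommendation from the environment variable table can be applied in the executable section by staging input onto node-local storage, running there, and copying results back to shared storage. The following is a sketch under these assumptions: the function name stage_and_run and the file names are hypothetical, `wc -l` stands in for the real program, and the node-local directory is assumed not to be needed after the job, so outputs are copied out explicitly.

```shell
#!/bin/bash
# stage_and_run: hypothetical helper. Copies INPUT from the submit directory
# to $TMPDIR, runs a stand-in command there, and copies OUTPUT back.
# Usage: stage_and_run INPUT OUTPUT
stage_and_run() {
  local input="$1" output="$2"
  local submit_dir="${SLURM_SUBMIT_DIR:-$PWD}"   # falls back when not in a job
  local work_dir="${TMPDIR:-/tmp}"               # node-local scratch space

  cp "$submit_dir/$input" "$work_dir/" || return 1
  # Stand-in for the real program: count the lines of the input file.
  ( cd "$work_dir" && wc -l < "$input" > "$output" ) || return 1
  cp "$work_dir/$output" "$submit_dir/" || return 1
}
```

In a job file, this would follow the module commands, for example `stage_and_run input.dat output.dat`.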

SU Charges for GPUs

When you run jobs on compute nodes, you are charged SUs. Generally 1 SU = 1 hour on 1 core. Using GPUs will have a higher cost, however. Refer to the Account Management System page for the SU rates for GPUs or other accelerators on each cluster.
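Under the rule of thumb above (1 SU per core-hour), a CPU-only job costs its core count multiplied by its wall-clock hours. The following is a sketch of that arithmetic only. It assumes every allocated core is charged for the elapsed time and does not model GPU or accelerator rates, which are listed on the Account Management System page. The function name cpu_sus is hypothetical.

```shell
#!/bin/bash
# cpu_sus: hypothetical helper. Estimates CPU-only SUs as cores x hours,
# using the "1 SU = 1 hour on 1 core" rule. GPU rates are not modeled.
# Usage: cpu_sus CORES HH:MM:SS
cpu_sus() {
  local cores="$1" h m s
  IFS=: read -r h m s <<< "$2"
  awk -v c="$cores" -v h="$h" -v m="$m" -v s="$s" \
    'BEGIN { printf "%.2f\n", c * (h + m / 60 + s / 3600) }'
}

cpu_sus 96 05:00:00   # 480.00 SUs if the job runs its full 5-hour limit
cpu_sus 48 01:30:00   # 72.00
```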

Job Submission

Once your job script is ready, submit it to the Slurm batch scheduler with the sbatch command. For example, if you created a batch file named MyJob.slurm, the command to submit the job is as follows:

[username@aces ~]$ sbatch MyJob.slurm

Submitted batch job 3606
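Scripts that submit several jobs often need the job ID that sbatch prints. The following sketch extracts the ID from the "Submitted batch job" line shown above. Alternatively, sbatch's --parsable option prints only the job ID (plus the cluster name, if any), which avoids parsing altogether. The function name job_id_from_sbatch and the file NextJob.slurm are hypothetical.

```shell
#!/bin/bash
# job_id_from_sbatch: hypothetical helper. Reads sbatch output on stdin and
# prints the job ID, i.e. the 4th field of "Submitted batch job <ID>".
job_id_from_sbatch() {
  awk '/^Submitted batch job/ { print $4 }'
}

# Typical use on ACES (not run here):
#   jobid=$(sbatch MyJob.slurm | job_id_from_sbatch)
#   sbatch --dependency=afterok:"$jobid" NextJob.slurm   # start after success
echo "Submitted batch job 3606" | job_id_from_sbatch     # prints 3606
```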

Job Monitoring and Control Commands

After a job has been submitted, you may want to check on its progress or cancel it. Below is a list of the most commonly used job monitoring and control commands on ACES.

| Function | Command | Example |
|---|---|---|
| Submit a job | sbatch [script_file] | sbatch FileName.job |
| Cancel/Kill a job | scancel [job_id] | scancel 101204 |
| Check status of a single job | squeue --job [job_id] | squeue --job 101204 |
| Check status of all of a user's jobs | squeue -u [user_name] | squeue -u User1 |
| Check CPU and memory efficiency for a job | seff [job_id] | seff 101204 |

Here is an example of the information that the seff command provides for a completed job:

% seff 12345678

Job ID: 12345678

Cluster: ACES

User/Group: username/groupname

State: COMPLETED (exit code 0)

Nodes: 16

Cores per node: 28

CPU Utilized: 1-17:05:54

CPU Efficiency: 94.63% of 1-19:25:52 core-walltime

Job Wall-clock time: 00:05:49

Memory Utilized: 310.96 GB (estimated maximum)

Memory Efficiency: 34.70% of 896.00 GB (56.00 GB/node)
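The CPU efficiency reported by seff is CPU Utilized divided by core-walltime. Core-walltime is nodes × cores per node × wall-clock time. In the output above, that is 16 × 28 × 349 s = 156,352 s, which matches the 1-19:25:52 shown. The following sketch reproduces the 94.63% figure. The helper names are hypothetical, and to_seconds only handles Slurm's [D-]HH:MM:SS form.

```shell
#!/bin/bash
# to_seconds: hypothetical helper. Converts Slurm's [D-]HH:MM:SS to seconds.
to_seconds() {
  local t="$1" days=0 h m s
  if [[ "$t" == *-* ]]; then
    days="${t%%-*}"     # part before the dash (days)
    t="${t#*-}"         # part after the dash (HH:MM:SS)
  fi
  IFS=: read -r h m s <<< "$t"
  # 10# forces base 10 so fields such as "08" are not read as octal.
  echo $(( 10#$days * 86400 + 10#$h * 3600 + 10#$m * 60 + 10#$s ))
}

# cpu_efficiency: hypothetical helper mirroring seff's CPU Efficiency line.
# Usage: cpu_efficiency NODES CORES_PER_NODE CPU_UTILIZED WALL_CLOCK
cpu_efficiency() {
  local used core_wall
  used=$(to_seconds "$3")
  core_wall=$(( $1 * $2 * $(to_seconds "$4") ))
  awk -v u="$used" -v c="$core_wall" 'BEGIN { printf "%.2f\n", 100 * u / c }'
}

cpu_efficiency 16 28 1-17:05:54 00:05:49   # prints 94.63
```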

Job Examples

Several examples of Slurm job files for ACES are listed below.

NOTE: Job examples are NOT lists of commands, but are a template of the contents of a job file. These examples should be pasted into a text editor and submitted as a job to be tested, not entered as commands line by line.

There are several optional parameters available for jobs on ACES. In the examples below, they are commented out (ignored) with ##. To use one of these parameters, change the ## to a single # and adjust the parameter value accordingly.

Example Job 1: A serial job (single core, single node)

#!/bin/bash

##NECESSARY JOB SPECIFICATIONS

#SBATCH --job-name=JobExample1 #Set the job name to "JobExample1"

#SBATCH --time=01:30:00 #Set the wall clock limit to 1hr and 30min

#SBATCH --ntasks=1 #Request 1 task

#SBATCH --mem=5000M #Request 5000MB (5GB) per node

#SBATCH --output=Example1Out.%j #Send stdout/err to "Example1Out.[jobID]"

##OPTIONAL JOB SPECIFICATIONS

##SBATCH --account=123456 #Set billing account to 123456

##SBATCH --mail-type=ALL #Send email on all job events

##SBATCH --mail-user=email_address #Send all emails to email_address

#First Executable Line

Example Job 2: A multi core, single node job

#!/bin/bash

##NECESSARY JOB SPECIFICATIONS

#SBATCH --job-name=JobExample2 #Set the job name to "JobExample2"

#SBATCH --time=6:30:00 #Set the wall clock limit to 6hr and 30min

#SBATCH --nodes=1 #Request 1 node

#SBATCH --ntasks-per-node=96 #Request 96 tasks/cores per node

#SBATCH --mem=488G #Request 488G (488GB) per node

#SBATCH --output=Example_SNMC_CPU.%j #Redirect stdout/err to file

#SBATCH --partition=cpu #Specify partition to submit job to

##OPTIONAL JOB SPECIFICATIONS

##SBATCH --account=123456 #Set billing account to 123456

##SBATCH --mail-type=ALL #Send email on all job events

##SBATCH --mail-user=email_address #Send all emails to email_address

#First Executable Line

Example Job 3: A multi core, multi node job

#!/bin/bash

##NECESSARY JOB SPECIFICATIONS

#SBATCH --job-name=Example_MNMC_CPU #Set the job name to Example_MNMC_CPU

#SBATCH --time=01:30:00 #Set the wall clock limit to 1hr 30min

#SBATCH --nodes=2 #Request 2 nodes

#SBATCH --ntasks-per-node=96 #Request 96 tasks/cores per node

#SBATCH --mem=488G #Request 488G (488GB) per node

#SBATCH --output=Example_MNMC_CPU.%j #Redirect stdout/err to file

#SBATCH --partition=cpu #Specify partition to submit job to

##OPTIONAL JOB SPECIFICATIONS

##SBATCH --account=123456 #Set billing account to 123456

##SBATCH --mail-type=ALL #Send email on all job events

##SBATCH --mail-user=email_address #Send all emails to email_address

#First Executable Line

Example Job 4: A serial GPU job (single node, single core)

#!/bin/bash

##NECESSARY JOB SPECIFICATIONS

#SBATCH --job-name=Example_SNSC_GPU #Set the job name to Example_SNSC_GPU

#SBATCH --time=01:30:00 #Set the wall clock limit to 1hr 30min

#SBATCH --ntasks=1 #Request 1 task

#SBATCH --mem=488G #Request 488G (488GB) per node

#SBATCH --output=Example_SNSC_GPU.%j #Redirect stdout/err to file

#SBATCH --partition=gpu #Specify partition to submit job to

#SBATCH --gres=gpu:h100:1 #Specify GPU(s) per node, 1 H100 GPU

##OPTIONAL JOB SPECIFICATIONS

##SBATCH --account=123456 #Set billing account to 123456

##SBATCH --mail-type=ALL #Send email on all job events

##SBATCH --mail-user=email_address #Send all emails to email_address

#First Executable Line

Example Job 5: A multi core GPU job (single node, multiple cores)

#!/bin/bash

##NECESSARY JOB SPECIFICATIONS

#SBATCH --job-name=Example_SNMC_GPU #Set the job name to Example_SNMC_GPU

#SBATCH --time=01:30:00 #Set the wall clock limit to 1hr 30min

#SBATCH --nodes=1 #Request 1 node

#SBATCH --ntasks-per-node=48 #Request 48 tasks/cores per node

#SBATCH --mem=488G #Request 488G (488GB) per node

#SBATCH --output=Example_SNMC_GPU.%j #Redirect stdout/err to file

#SBATCH --partition=gpu #Specify partition to submit job to

#SBATCH --gres=gpu:h100:4 #Specify GPU(s) per node, 4 H100 GPUs

##OPTIONAL JOB SPECIFICATIONS

##SBATCH --account=123456 #Set billing account to 123456

##SBATCH --mail-type=ALL #Send email on all job events

##SBATCH --mail-user=email_address #Send all emails to email_address

#First Executable Line

Example Job 6: A parallel GPU job (multiple node, multiple core)

#!/bin/bash

##NECESSARY JOB SPECIFICATIONS

#SBATCH --job-name=Example_MNMC_GPU #Set the job name to Example_MNMC_GPU

#SBATCH --time=01:30:00 #Set the wall clock limit to 1hr 30min

#SBATCH --nodes=2 #Request 2 nodes

#SBATCH --ntasks-per-node=48 #Request 48 tasks/cores per node

#SBATCH --mem=488G #Request 488G (488GB) per node

#SBATCH --output=Example_MNMC_GPU.%j #Redirect stdout/err to file

#SBATCH --partition=gpu #Specify partition to submit job to

#SBATCH --gres=gpu:h100:1 #Specify GPU(s) per node, 1 H100 GPU

##OPTIONAL JOB SPECIFICATIONS

##SBATCH --account=123456 #Set billing account to 123456

##SBATCH --mail-type=ALL #Send email on all job events

##SBATCH --mail-user=email_address #Send all emails to email_address

#First Executable Line

Batch Queues

Upon job submission, Slurm sends your jobs to the appropriate batch queues. These are (software) service stations configured to control the scheduling and dispatch of the jobs that arrive in them. Batch queues are characterized by many parameters. Some of the most important are:

- The total number of jobs that can be concurrently running (number of run slots)

- The wall-clock time limit per job

- The type and number of nodes available for jobs

These settings control whether a job will remain idle in the queue or be dispatched quickly for execution.

The current queue structure (updated on June 30, 2025) is:

| Queue Name | Max Nodes per Job (Max Cores) | Max Devices | Max Duration | Max Running Jobs |
|---|---|---|---|---|
| cpu | 64 nodes (6,144 cores) | 0 | 3 days | 40 |
| gpu | 8 nodes (672 cores) | 32 | 2 days | 40 |
| gpu_debug | 1 node (96 cores) | 2 | 2 hours | 40 |
| pvc | 32 nodes (3072 cores) | 32 | 2 days | 40 |
| bittware | 2 node (96 cores) | 2 | 2 days | 40 |
| memverge | 1 node (96 cores) | 1 | 2 days | 40 |
| nextsilicon* | 1 node (96 cores) | 1 | 2 days | 40 |

* The nextsilicon queue is available upon request.
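A job that requests more walltime than its queue allows is typically rejected at submission or left pending. The following is a sketch of a pre-submission check against the Max Duration column above. The function name fits_queue is hypothetical. The limits are copied from the June 30, 2025 table and will go stale if the queues change. The helper only parses hours:minutes:seconds, not Slurm's days-hours form.

```shell
#!/bin/bash
# fits_queue: hypothetical helper. Succeeds if an HH:MM:SS walltime fits the
# Max Duration of the named queue (limits copied from the June 30, 2025 table).
# Usage: fits_queue QUEUE HH:MM:SS    (returns 2 for an unknown queue)
fits_queue() {
  local queue="$1" h m s max_hours
  IFS=: read -r h m s <<< "$2"
  case "$queue" in
    cpu) max_hours=72 ;;                                     # 3 days
    gpu|pvc|bittware|memverge|nextsilicon) max_hours=48 ;;   # 2 days
    gpu_debug) max_hours=2 ;;                                # 2 hours
    *) echo "unknown queue: $queue" >&2; return 2 ;;
  esac
  (( 10#$h * 3600 + 10#$m * 60 + 10#$s <= max_hours * 3600 ))
}

fits_queue gpu 36:00:00 && echo "fits"             # prints fits
fits_queue gpu_debug 03:00:00 || echo "too long"   # prints too long
```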

Checking queue usage

- The sinfo command can be used to get information on queues and their nodes.

PARTITION AVAIL TIMELIMIT JOB_SIZE NODES(A/I/O/T) CPUS(A/I/O/T)

cpu* up 3-00:00:00 1-64 52/0/2/54 4704/288/192/5184

gpu up 2-00:00:00 1-8 4/3/0/7 224/448/0/672

gpu_debug up 2:00:00 1 1/2/0/3 16/272/0/288

pvc up 2-00:00:00 1-32 12/18/2/32 288/2592/192/3072

bittware up 2-00:00:00 1 0/0/2/2 0/0/192/192

memverge up 2-00:00:00 1 0/7/3/10 0/672/288/960

nextsilicon up 2-00:00:00 1 0/2/0/2 0/192/0/192

Note: A/I/O/T stands for Allocated, Idle, Other (for example, down or drained), and Total, respectively.
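The NODES(A/I/O/T) column can be filtered with awk to list the queues that currently have idle nodes. The following sketch assumes the six-column layout shown above. On a standard Slurm installation, `sinfo -o "%P %a %l %s %F %C"` should produce these columns, although the default output on ACES may already be configured this way. The function name idle_partitions is hypothetical.

```shell
#!/bin/bash
# idle_partitions: hypothetical helper. Reads sinfo output in the layout
# shown above (header line first) and prints each partition with idle nodes.
idle_partitions() {
  awk 'NR > 1 {
    split($5, nodes, "/")          # nodes[2] is the idle count in A/I/O/T
    name = $1
    sub(/\*$/, "", name)           # drop the "*" that marks the default queue
    if (nodes[2] + 0 > 0) print name, nodes[2]
  }'
}

# Typical use: sinfo -o "%P %a %l %s %F %C" | idle_partitions
```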

Checking node usage

- The pestat command can be used to generate a list of nodes and their corresponding information, including their CPU usage and current jobs.

Hostname Partition Node Num_CPU CPUload Memsize Freemem Joblist

State Use/Tot (15min) (MB) (MB) JobID User ...

ac001 memverge idle 0 96 0.00 500000 111162 (null)

ac002 memverge idle 0 96 0.00 500000 111310 (null)

ac003 memverge idle 0 96 0.00 500000 111318 (null)

ac004 memverge idle 0 96 0.00 500000 111335 (null)

ac005 cpu* mix 92 96 39.65* 500000 356926 (null) 212838 userA (null) 212794

ac006 cpu* mix 92 96 40.35* 500000 270787 (null) 212789 userA (null) 212790 userA (null) 212671 userA (null)

ac007 cpu* mix 92 96 42.44* 500000 291258 (null) 212844 userA (null) 212839 userA (null)

ac008 cpu* mix 76 96 26.00* 500000 244169 (null) 212861 userA (null) 212825 userA (null) 212817 userA (null) 212793 userA

ac009 cpu* mix 92 96 40.22* 500000 341938 (null) 212859 userA (null) 212851 userA (null)

ac010 pvc mix 24 96 9.96* 500000 380280 gpu:pvc:4 216175 userB gpu:pvc=8 *

ac011 pvc mix 24 96 10.06* 500000 380319 gpu:pvc:4 216175 userB gpu:pvc=8 *

ac012 pvc mix 24 96 9.88* 500000 380099 gpu:pvc:4 216176 userB gpu:pvc=8 *

ac013 pvc mix 24 96 9.97* 500000 379063 gpu:pvc:4 216176 userB gpu:pvc=8 *

....

- To generate a list of the GPU nodes, their current configuration, and their current jobs:

Hostname Partition Node Num_CPU CPUload Memsize Freemem GRES/node Joblist

State Use/Tot (15min) (MB) (MB) JobID User GRES/job ...

....

ac026 pvc alloc 96 96 1.48* 500000 498854 gpu:pvc:6 216258 userA gpu:pvc=14

ac030 pvc alloc 96 96 1.35* 500000 498547 gpu:pvc:8 216258 userA gpu:pvc=14

ac034 pvc mix 24 96 10.11* 500000 381892 gpu:pvc:4 216249 userB gpu:pvc=8 *

ac039 pvc mix 24 96 9.96* 500000 381859 gpu:pvc:4 216249 userB gpu:pvc=8 *

ac041 gpu idle 0 96 0.00 500000 503071 gpu:h100:8(S:0)

ac045 gpu idle 0 96 0.00 500000 498032 gpu:h100:8(S:0)

ac049 gpu alloc 96 96 1.52* 500000 497272 gpu:h100:4(S:0) 216241 userC gpu:h100=4

ac050 pvc idle 0 96 0.00 500000 485762 gpu:pvc:2

ac051 pvc drain* 0 96 0.11 500000 512822 gpu:pvc:2

ac055 gpu alloc 96 96 1.59* 500000 503074 gpu:h100:4(S:0) 216240 userC gpu:h100=4

ac062 pvc idle 0 96 0.00 500000 510254 gpu:pvc:4

ac064 gpu mix 16 96 1.01* 500000 497500 gpu:a30:2(S:0) 216243 userD gpu:a30=1

ac065 gpu_debug idle 0 96 0.25 500000 476010 gpu:a30:2(S:0)

ac068 pvc idle 0 96 0.05 500000 509052 gpu:pvc:8

ac078 pvc mix 24 96 9.86* 500000 379652 gpu:pvc:4 216174 userE gpu:pvc=8 *

....

- The idle A30, H100, and PVC GPUs in various nodes can be listed using the gpuavail command.

CONFIGURATION

NODE NODE

TYPE COUNT

---------------------

gpu:pvc:4 17

gpu:pvc:2 11

gpu:h100:4 3

gpu:pvc:8 3

gpu:a30:2 2

gpu:h100:8 2

gpu:h100:2 1

gpu:pvc:6 1

AVAILABILITY

NODE GPU GPU GPUs CPUs GB MEM

NAME TYPE COUNT AVAIL AVAIL AVAIL

-------------------------------------------

ac023 pvc 4 4 96 488

ac024 pvc 8 8 96 488

ac026 pvc 6 6 96 488

ac030 pvc 8 8 96 488

ac034 pvc 4 4 96 488

ac039 pvc 4 4 96 488

ac050 pvc 2 2 96 488

ac062 pvc 4 4 96 488

ac064 a30 2 1 80 360

ac065 a30 2 2 96 488

ac068 pvc 8 8 96 488

ac082 pvc 2 2 96 488

ac083 pvc 2 2 96 488

ac086 pvc 2 2 96 488

ac087 pvc 2 2 96 488

ac094 pvc 2 2 96 488

ac095 pvc 2 2 96 488

ac097 pvc 2 2 96 488

ac098 h100 2 2 96 488

ac100 pvc 2 2 96 488

ac103 pvc 2 2 96 488
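The AVAILABILITY section lists one node per line, so it can be filtered to find nodes with enough free GPUs of a given type. The following sketch assumes the column order shown above: node name, GPU type, GPU count, GPUs available, CPUs available, and GB of memory available. The function name nodes_with_gpus is hypothetical, and the gpuavail output format may change.

```shell
#!/bin/bash
# nodes_with_gpus: hypothetical helper. Reads gpuavail output on stdin and
# prints nodes whose available GPU count of the given type is at least MIN.
# Usage: gpuavail | nodes_with_gpus TYPE MIN
nodes_with_gpus() {
  awk -v type="$1" -v min="$2" '
    $2 == type && $4 + 0 >= min + 0 { print $1, $4 }   # $4 = GPUs available
  '
}
```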

Checking bad nodes

- The badnodes command can be used to view a current list of bad nodes on the machine. The following output is only an example; run badnodes to see the current list.

REASON USER TIMESTAMP STATE NODELIST

testing DIMM B5 somebody 2023-07-01T12:31:22 drained* ac017

Not responding slurm 2023-07-19T12:10:47 down* ac001

Checkpointing

Checkpointing is the practice of creating a save state of a job so that, if interrupted, it can begin again without starting completely over. This technique is especially important for long jobs on the batch systems, because each batch queue has a maximum walltime limit.

A checkpointed job file is particularly useful for the gpu queue, which is limited to 2 days of walltime due to its demand. Many jobs that use GPUs must run longer than two days, such as training a machine learning model.

Users can modify their code to save state so that it can restart automatically after being cut off by the walltime limit. There are many ways to checkpoint a job depending on the software used, but it is almost always done at the application level. How often to save state depends on the fault tolerance the job needs. In the case of the batch system, however, the exact time of the 'fault' is known: it is the job's walltime limit (at most the queue's maximum). In this case, only one checkpoint needs to be created, right before the limit is reached. Many different resources are available for checkpointing techniques. Some examples for common software are listed below.
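One way to make the single, last-minute checkpoint described above is to have Slurm signal the job shortly before the time limit and let the script react. The following sketch assumes sbatch's --signal option, where B:USR1@300 asks Slurm to send SIGUSR1 to the batch shell 300 seconds before the limit. The loop, step counter, and checkpoint file are hypothetical stand-ins for an application's own save and restart logic. Bash runs a trap only after the current foreground command finishes. For the trap to fire promptly, a long-running program should therefore be started in the background and waited on (`program & wait`).

```shell
#!/bin/bash
#SBATCH --signal=B:USR1@300   # SIGUSR1 to the batch shell 300 s before the limit

# Hypothetical checkpoint file; a real application would save its own state.
CKPT_FILE="${CKPT_FILE:-checkpoint.txt}"
stop_requested=0
trap 'stop_requested=1' USR1      # only set a flag; the loop does the saving

# run_steps: hypothetical work loop that resumes from, and writes, CKPT_FILE.
run_steps() {
  local total="$1" step=0
  if [ -f "$CKPT_FILE" ]; then
    step=$(< "$CKPT_FILE")        # resume where the previous job stopped
  fi
  while (( step < total )); do
    sleep 0.1                     # stand-in for one unit of real work
    step=$(( step + 1 ))
    if (( stop_requested )); then
      echo "$step" > "$CKPT_FILE" # save state before the walltime is reached
      echo "checkpointed at step $step"
      return 0
    fi
  done
  rm -f "$CKPT_FILE"              # finished: the checkpoint is no longer needed
  echo "completed $total steps"
}
```

In a job file, the executable section would call, for example, `run_steps 1000`. Resubmitting the same file then resumes from the saved step.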

Advanced Documentation

This guide only covers the most commonly used options and useful commands.

For more information, check the man pages for individual commands or the Slurm Documentation.